Running Extractions

A run is an extraction job. You select a robot, optionally provide start URLs and/or an extraction budget, and Extralt crawls the site to produce captures.

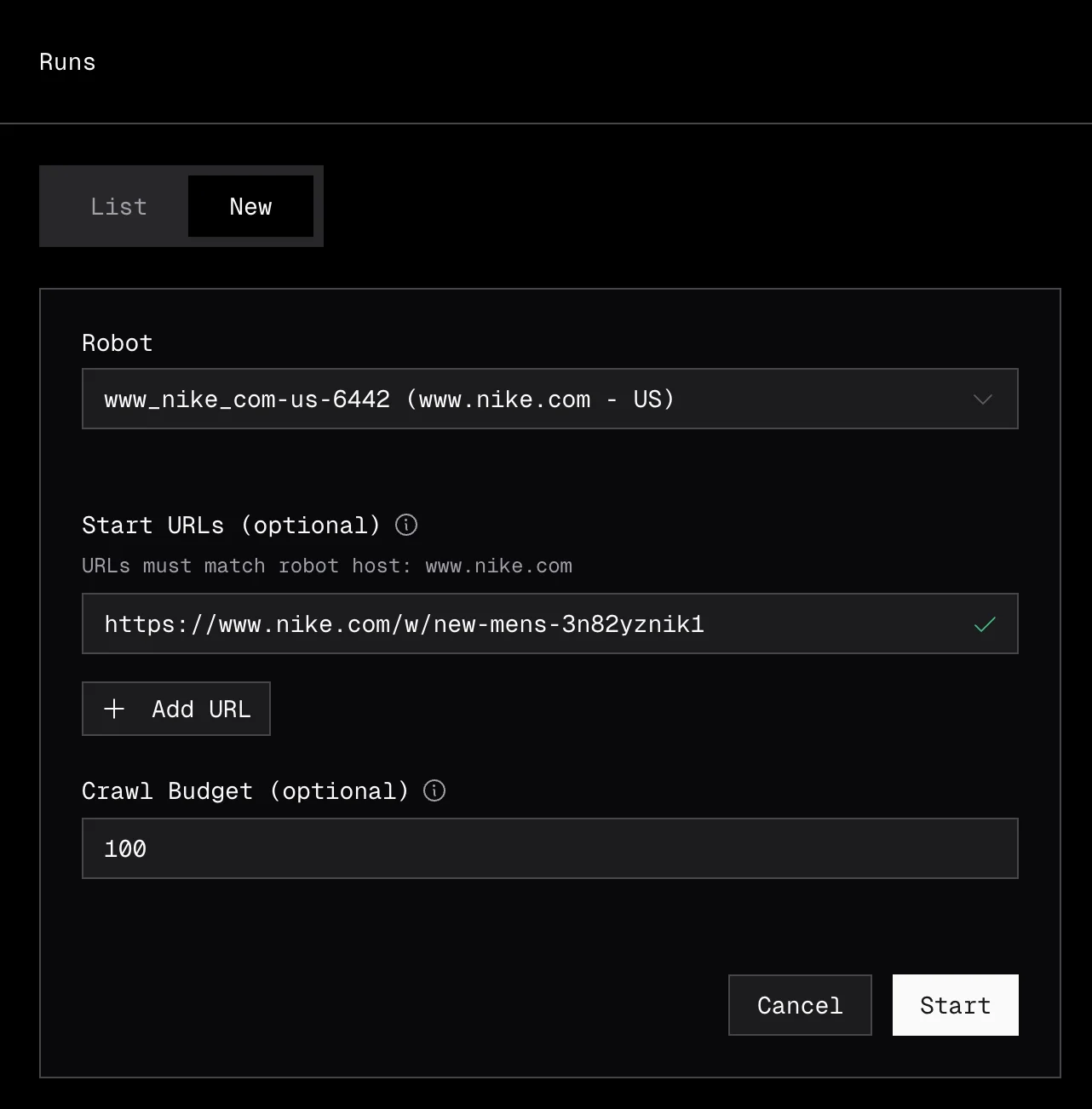

Creating a run

• Dashboard

Navigate to Extract > Runs and click New Run, or click Start Run on any robot in the robots list.

Select the robot to use, enter your start URLs (one per line), and optionally set a budget to limit how many URLs the robot will extract. Click Start to begin the run.

• API

    export EXTRALT_API_KEY="your-api-key"

    curl -s -X POST "https://api.extralt.com/runs" \
      -H "Authorization: Bearer $EXTRALT_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "robotId": "your-robot-id",
        "urls": [
          "https://example-store.com/products/sneakers",
          "https://example-store.com/products/boots"
        ],
        "budget": 100
      }' | jq

Parameters

| Parameter | Required | Description |
|---|---|---|
| robotId | Yes | The robot to use for extraction |
| urls | Yes | Start URLs to crawl |
| budget | No | Maximum number of URLs to extract. Each URL costs 1 credit. |
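When the start URLs live in a text file (one per line, as in the dashboard form), the request body can be assembled with jq instead of being written by hand. The following is a sketch only: `build_run_payload` and `urls.txt` are hypothetical names, and the field names are the ones listed in the parameters table.

```shell
# Build the JSON body for POST /runs from a text file of start URLs.
# build_run_payload is a hypothetical helper, not part of any Extralt tooling.
# $1 = robot ID, $2 = file with one URL per line, $3 = optional budget.
build_run_payload() {
  robot_id="$1"; urls_file="$2"; budget="${3:-}"
  # -R reads raw text and -s slurps the whole file into one string;
  # splitting on newlines and dropping empty strings yields a JSON array.
  urls_json="$(jq -R -s 'split("\n") | map(select(length > 0))' "$urls_file")"
  if [ -n "$budget" ]; then
    jq -n -c --arg robotId "$robot_id" --argjson urls "$urls_json" \
      --argjson budget "$budget" \
      '{robotId: $robotId, urls: $urls, budget: $budget}'
  else
    # budget is optional, so leave the key out when none is given.
    jq -n -c --arg robotId "$robot_id" --argjson urls "$urls_json" \
      '{robotId: $robotId, urls: $urls}'
  fi
}

# Usage (sends the generated body on stdin via -d @-):
#   build_run_payload "your-robot-id" urls.txt 100 |
#     curl -s -X POST "https://api.extralt.com/runs" \
#       -H "Authorization: Bearer $EXTRALT_API_KEY" \
#       -H "Content-Type: application/json" \
#       -d @- | jq
```

Passing values through `--arg` and `--argjson` lets jq handle quoting, so URLs containing quotes or backslashes cannot break the JSON.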

Run lifecycle

| Status | Description |
|---|---|
| pending | Run is queued for execution |
| starting | Run is starting |
| running | Actively crawling and extracting |
| completed | Finished successfully |
| failed | Encountered an unrecoverable error |
| stopped | Manually stopped |

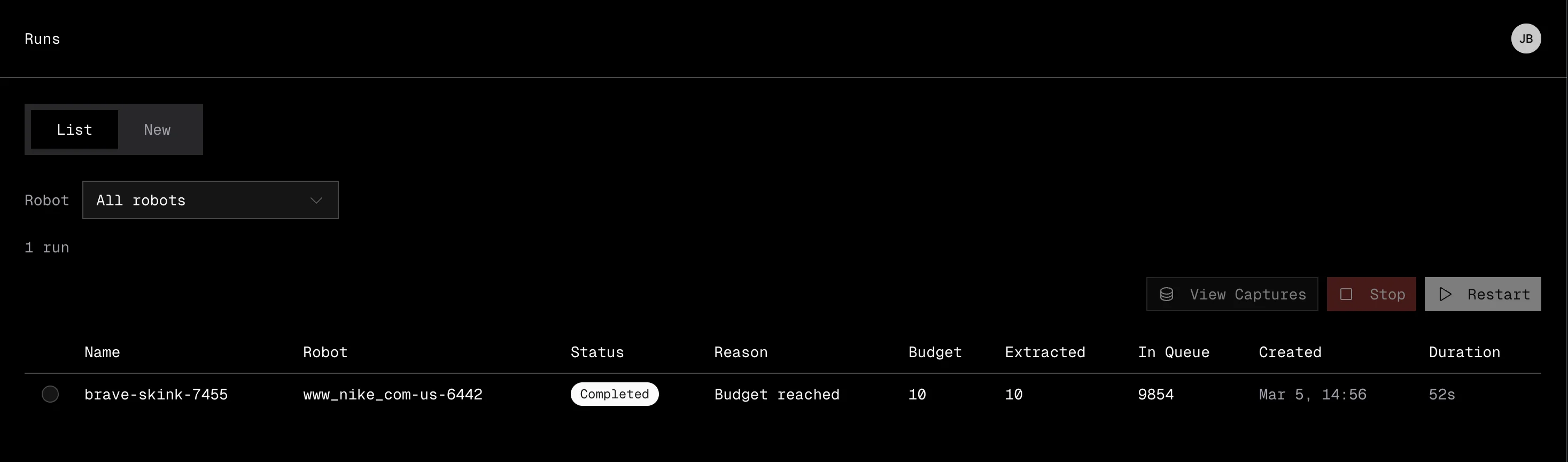

Monitoring a run

• Dashboard

Navigate to Extract > Runs to see all your runs in a sortable table.

The table shows:

| Column | Description |
|---|---|
| Name | The run name |
| Robot | Which robot is executing the run |
| Status | Current status with a color-coded badge |
| Budget | Maximum URLs to extract |
| Extracted | Number of URLs extracted so far |
| Queue | URLs remaining in the crawl queue |
| Created | When the run was started |
| Duration | How long the run has been running or took to complete |

You can take actions on runs directly from the table:

- Stop a running or pending run to halt extraction early.

- Restart a stopped or failed run, optionally with a new budget.

• API

Poll the run endpoint until it reaches a terminal status:

    curl -s "https://api.extralt.com/runs/$RUN_ID" \
      -H "Authorization: Bearer $EXTRALT_API_KEY" | jq

See Common Patterns for a full polling example.
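A minimal polling loop might look like the sketch below. It assumes the response is a JSON object with a top-level `status` field, and it treats `completed`, `failed`, and `stopped` as terminal based on the lifecycle table. `is_terminal_status`, `fetch_run_status`, and `wait_for_run` are illustrative names, not part of any Extralt tooling.

```shell
# Assumption: GET /runs/$RUN_ID returns JSON with a top-level "status" field
# holding one of the lifecycle values (pending, starting, running, ...).

# Succeed (exit code 0) when the status is one a run does not leave on its own.
is_terminal_status() {
  case "$1" in
    completed|failed|stopped) return 0 ;;
    *) return 1 ;;
  esac
}

# Print the current status of a run. Requires curl, jq, and EXTRALT_API_KEY.
fetch_run_status() {
  curl -s "https://api.extralt.com/runs/$1" \
    -H "Authorization: Bearer $EXTRALT_API_KEY" | jq -r '.status'
}

# Poll until the run reaches a terminal status, then print that status.
# $1 = run ID, $2 = seconds between polls (default 10),
# $3 = maximum number of polls before giving up (default 360).
wait_for_run() {
  run_id="$1"; interval="${2:-10}"; max_polls="${3:-360}"
  polls=0
  while [ "$polls" -lt "$max_polls" ]; do
    status="$(fetch_run_status "$run_id")"
    if is_terminal_status "$status"; then
      echo "$status"
      return 0
    fi
    polls=$((polls + 1))
    sleep "$interval"
  done
  echo "timed out waiting for run $run_id" >&2
  return 1
}

# Usage: final_status="$(wait_for_run "$RUN_ID" 10)"
```

The poll cap keeps a script from waiting forever if a run stays queued, for example while it waits for a concurrent run slot.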

Concurrent run limits

| Plan | Concurrent runs |
|---|---|
| Start | 1 |
| Scale | Up to 10 |

If you exceed your concurrent run limit, the run will be queued until a slot opens.

Downloading data

• Dashboard

Export captures directly from Extract > Captures. You can filter by run or robot, then download as JSONL or Parquet. See Working with Captures for details.

• API

The download endpoint returns a signed URL to a compressed .jsonl.lz4 file with all captures from the run. The URL is valid for 10 minutes.
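Because the signed URL expires after 10 minutes, it is usually simplest to request it and download the file in one step. The sketch below assumes the response is a JSON object with a `url` field (as the `jq '.url'` filter in this section suggests) and that the file uses the standard LZ4 frame format, which the `lz4` command-line tool can decompress. `get_download_url` and `download_captures` are hypothetical helper names.

```shell
# Assumptions: the download endpoint returns {"url": "..."} and the file is a
# standard LZ4 frame. Requires curl, jq, the lz4 CLI, and EXTRALT_API_KEY.

# Print the signed download URL for a run.
get_download_url() {
  curl -s "https://api.extralt.com/runs/$1/download" \
    -H "Authorization: Bearer $EXTRALT_API_KEY" | jq -r '.url'
}

# Download and decompress a run's captures.
# $1 = run ID, $2 = output path for the decompressed JSONL file.
download_captures() {
  run_id="$1"; out="$2"
  url="$(get_download_url "$run_id")"
  # jq prints "null" when the field is missing, so treat that as a failure too.
  if [ -z "$url" ] || [ "$url" = "null" ]; then
    echo "no download URL returned for run $run_id" >&2
    return 1
  fi
  # Fetch right away: the signed URL is only valid for 10 minutes.
  # -f makes curl fail on HTTP errors instead of saving the error body.
  curl -sfL "$url" -o "$out.lz4" || return 1
  # -d decompresses, -f overwrites an existing output file.
  lz4 -d -f "$out.lz4" "$out"
}

# Usage: download_captures "$RUN_ID" captures.jsonl
```

To retrieve only the signed URL, the request on its own is: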

    curl -s "https://api.extralt.com/runs/$RUN_ID/download" \
      -H "Authorization: Bearer $EXTRALT_API_KEY" | jq '.url'

Recurring extractions

To run extractions on a recurring cadence without manual intervention, set up a schedule. A schedule creates runs automatically at the interval you specify.

See Schedules for the complete guide.
